Recently we started working on a project which involves an existing database stored safely in a Microsoft SQL Server. I knew that Sybase is the precessor of M$SQL but that is roughly where my deep knowledge on this particular piece of software ended.

To get a good idea on what is in there and how we can mould it into something new and existing by exporting it to a Graph database I needed access beyond the existing web-interface.

The Microsoft Management Studio Express was recommended to me. For both. Exploring and exporting. Most of my as a developer I spent in Unix and most of the time I use a Mac as a workstation. I keep a Windows VM around for incidental testing in Explorer and such.

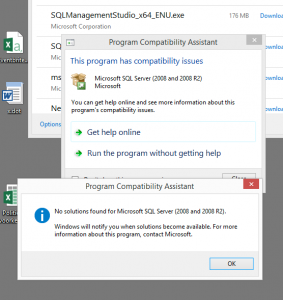

Installing “The SQL Management Studio” turned out to be the first challenge. Googling for it gives many results, all lead to download pages for various versions. Turns out that a Windows 8.1 install was not able to run a version of software from 2008.

After finding out that there is actual a newer version, from 2014 I found myself installing a complete local version of MS SQL server as I downloaded the wrong installer.

Apperently I am not the only one, just after finding the right download I stumbled upon this helpful external site which helps you finding the right download on Microsofts’ site…

Funny stuff: http://www.hanselman.com/blog/DownloadSQLServerExpress.aspx

Logging in the external database and explorer all the tables goes very smooth. All tables can be easily examined and a (mental) picture of the system starts to draw itself in my mind.

Lets try some exporting! The tool has various options. The “do a query and save it as CSV” seems the least intrusive and save way to work with this live system. The interface gives an option to select “the first 1000 rows” of a table and shows the query. This can be easily changed to SELECT * FROM table and the results can be saved into a CSV.

Nice.

I quickly start exporting various list-like tables, they all contain lists-of-options and are typically connected as a foreign key property to the real data.

Exporting these and importing those in Neo4J gives a good idea on the steps to do. Or so I thought. The lists export easily and the first few files are transferred.

Now I know that Neo is very capable of importing CSV directly but I won’t try it in this case. The reasons for that are various but mainly because I anticipate conversion and preprocessing the data before putting it in the graph.

So I decide to do all the work in Python; with its CSV module it can everything I need, read, convert and insert directly via the py2neo module.

The first thing I notice is that csv.Dictreader picks up some garbage for the first label in the file. It turns out to be a UTF-8 BOM written by the SQL export. Nice, so the tool exports UTF-8; that would be very good.

<EF><BB><BF> ; your friendly BOM.

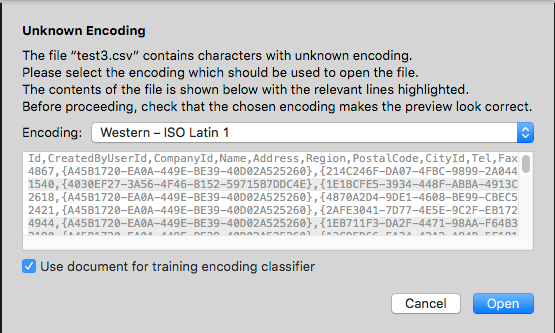

As those BOMs can be a real pain I decide to remove them and store the files as UTF-8 without a BOM. To my surprise I get this message:

Ah the warm fuzzy Microsoft feeling! Of course lets create files with a UTF-8 BOM which actually are standaard ISO 8859-1 encoded and NOT in UTF-8. Sigh..

After finding this I also noticed that the files have no string escaping and some fields contain a , as data. This completely breaks the CSV parsing. In order to get at least some data in I decide to hand-edit those few records for now.

A few hurdles, but the lists are in Neo4J and we can load some real data now and connect them to the rights lists!

Back to the SQL Management tool and export the big tables. They go very smooth and it is only after transferring them to the development server that I notice their size. It is all text but the files are well below 1 Mb.

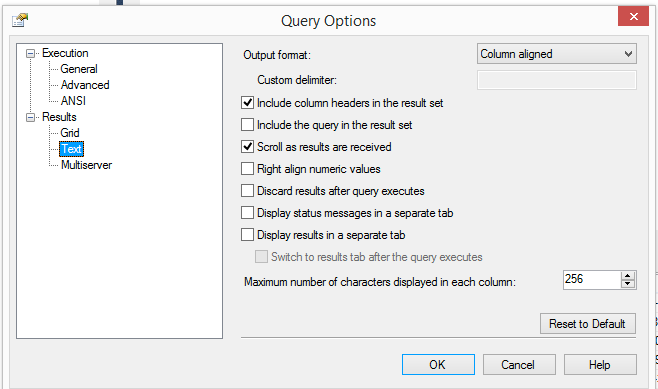

During the exports I had to go to a options screen to select CSV all the time, once for every table I wanted to export. In that screen I noticed a funny option, and suddenly I connected that option to the filesize.

Yes, longtext fields containing several pages of text are truncated to 256 chars.

Baffled I realised that using the “Management Studio Express” was not a viable export option.

Googling this unknown territory I read about people doing queries in the Tool, selecting all output in on the screen and then copying it to the clipboard only to paste it back in Excel.

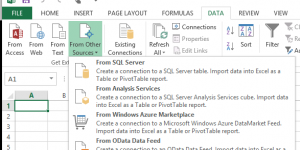

That sounded horrible, but it did connect me to the solution: Excel.

It’s fairly easy to set up “an external datasource”

and connect the Excel sheet to the tables you want..

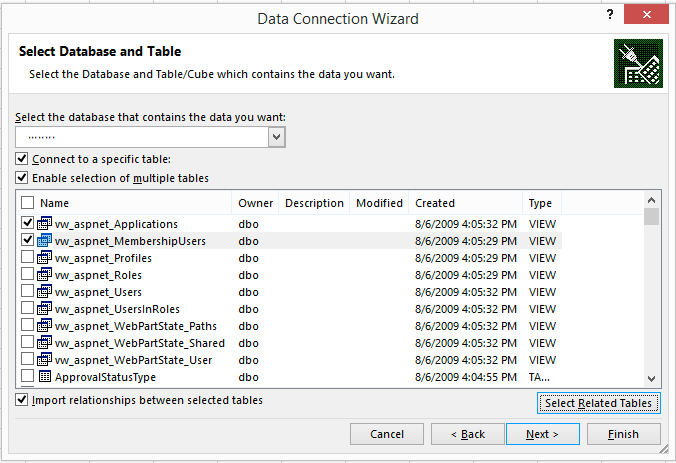

Make sure that you only select the tables you really need, selecting all tables with give you a Sheet per table but no clue on which table it is; the sheet is called Sheet, and does not inherit the table name. Break it up in related batches to keep sanity.

Another irk is that if you do not check the “Import relationships between selected tables” it will drop the Foreign Key columns. You will end up with useless data which cannot be related anymore.

The strategy I developed was to import one “big data” table per batch and include all the related tables via “Select related tables”. That works. Not great but fine.

You might think the “Select related tables” does the automatic good thing for you, well no. Not sure what it does but make sure you examine the output of the command below thoroughly and make sure you check every table in the “Select Database and tables” dialog.

As all sheets will have mysterious Sheet1, Sheet2 etc names. You still need to figure out which sheet contains what data.

An overview of all related tables is quite useful. This query gives that list:

USE DATABASENAME;

GO

SELECT

f.name AS foreign_key_name

,OBJECT_NAME(f.parent_object_id) AS table_name

,COL_NAME(fc.parent_object_id, fc.parent_column_id) AS constraint_column_name

,OBJECT_NAME (f.referenced_object_id) AS referenced_object

,COL_NAME(fc.referenced_object_id, fc.referenced_column_id) AS referenced_column_name

,is_disabled

,delete_referential_action_desc

,update_referential_action_desc

FROM sys.foreign_keys AS f

INNER JOIN sys.foreign_key_columns AS fc

ON f.object_id = fc.constraint_object_id

WHERE f.parent_object_id = OBJECT_ID('dbo.TABLENAME');

Remember to change DATABASENAME and TABLENAME.

It took me quite a few roundtrips before I got the data out in such a way that it was actual useable. Hopefully thanks to these insights I can skip a few in the future. At least there is no BOM in the Excel CSV, the text of all long textfields is actual there and it is sanely between quotes.

Remember; avoid Microsoft; if possible route around it. Otherwise patience is virtue.